Category: machine-learning

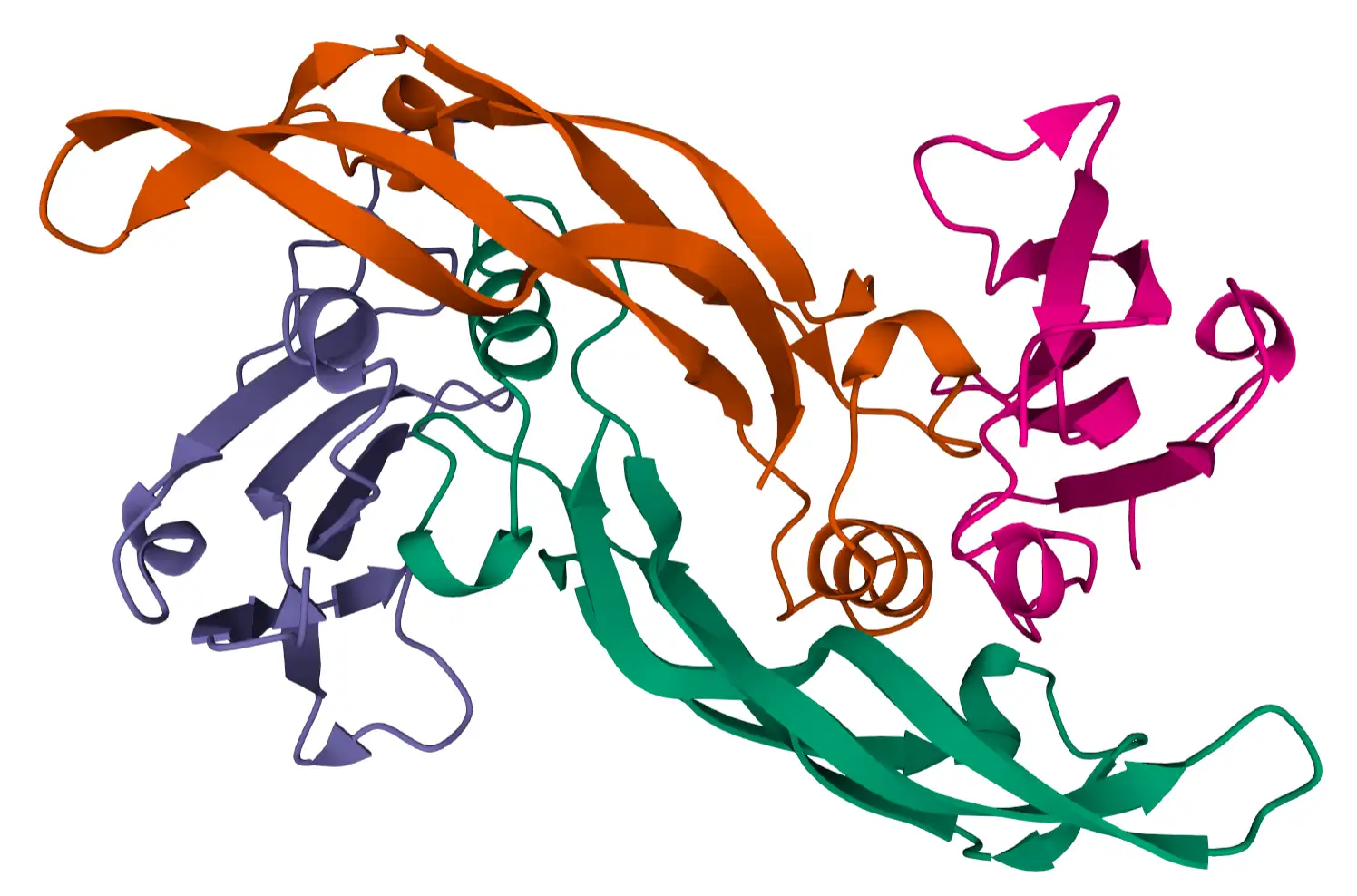

Predicting Protein Function with DeepChain 12 May 2021

CRL Task 6: Causal Imitation Learning 16 Jan 2021

CRL Task 4: Generalisability and Robustness 15 Jan 2021

CRL Task 5: Learning Causal Models 15 Jan 2021

Preliminaries for CRL 10 Dec 2020

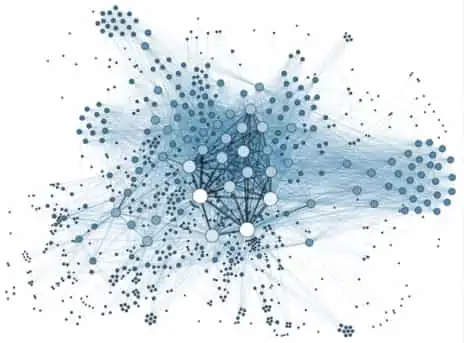

Causal Reinforcement Learning: A Primer 9 Dec 2020

Interventions and Multivariate SCMs 1 Sept 2020

Causality and Machine Learning 10 Aug 2020

Learning Causal Models 9 Aug 2020

Uncertainty in Model Based RL 13 Jun 2020

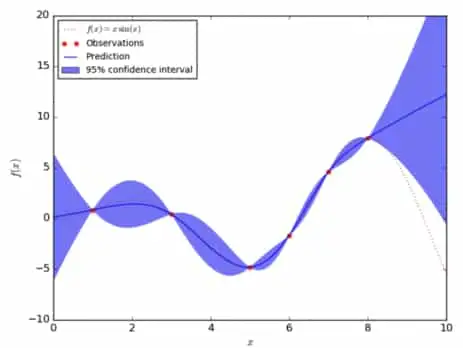

Learning with a Model 4 Jun 2020

World Models: Learning by Imagination 28 May 2020

Learning with a Policy 25 May 2020
