And as quickly as that, we’re at task 6 of our quest. Causal imitation learning is perhaps the most fanciful-sounding, but at it’s core it remains as simple a goal as the challenge presented by Abbeel and Ng in 2004. The really exciting part here is that we have rigorous methods for combining our causal techniques with imitation learning procedures using RL. This is a really cutting edge research area and we only discuss one paper in detail here. Keep an eye out for exciting developments coming from left, right and centre!

Causal Imitation Learning

We now reach the final task presented by Bareinboim in his development of the causal reinforcement learning framework 1Bareinboim, E. (2020). Causal Reinforcement Learning. ICML 2020.. This task involves the interesting challenge of learning by expert demonstration - imitation learning. In their now classic paper, Abbeel and Ng 2Abbeel, P., & Ng, A. Y. (2004). Apprenticeship learning via inverse reinforcement learning. ICML. explored applying inverse reinforcement learning (IRL) techniques to the imitation learning procedure. Essentially, IRL learns a reward function that emphasises the observed expert trajectories. This is in contrast to the other common method of imitation learning known as behaviour cloning where an agent seeks to mimic the policy of the expert. Both these methods have been successful in their own right, but they make the strong assumption that actions of the expert are fully observed by the imitator. 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders. addresses some of these shortcomings by introducing a complete graphical criterion for determining whether imitation learning is feasible given observational data and knowledge about the underlying causal process. Further, a sufficient algorithm for identifying an imitation policy when this criterion does not hold is presented.

Partially Observable SCM 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders.: A POSCM is a tuple . Here is an SCM, represents the observed endogenous variables, and represents the latent (unobserved) endogenous variables. The observed and latent variables are mutually exhaustive over the endogenous variables.

The task at hand is to determine the value of performing some intervention (action) that is part of the observed variable set, . Assuming the reward is latent, we wish to identify a policy such that the expected reward exceeds a certain minimum performance requirement, . We say is identifiable if for a subset of the exogenous variables, , the distribution is uniquely computable from the observation distribution and POSCM, . In other words, if we can identify the outcome from the observations of the expert behaviour in an imitation learning context. In fact, when the reward is latent (not all are observed), we cannot identify (see corollary 1 in 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders.). We thus need more information to learn an effective imitation policy.

By assuming that the observed actions are demonstrated by an expert (exceeds a threshold), we relax the need to worry about non-identifiability issues. Further, we say a reward distribution is imitable if there exists some policy in the policy space that can identify the distribution for some POSCM. For example, consider with policy . Then the interventional distribution is

In other words, in this simple case the unobserved reward distribution is imitable purely by observing realisations of action . Importantly, as previously discussed, the reward distribution remains unidentifiable. That is, imitability does not guarantee identifiability of the imitators reward distribution. In fact, Zhang et al. further show that if an expert and imitator share the policy space (possible actions), the the policy itself is always imitable. When the policy spaces do not agree, the criteria become more complicated. We now explore some graphical criteria for this case.

Imitation Backdoor 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders.: Consider a causal system with diagram and policy space . We say satisfies the imitation backdoor criterion (i-backdoor) with respect to if and only if the is a subset of the parents of and is conditionally independent of given in the graph with edges out of removed. Formally, and

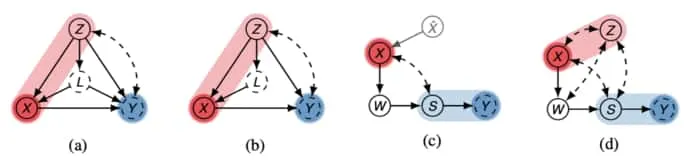

For an example of how this definition applies, consider the figure below. Specifically, (a) with set . Here we have is in the parents of the policy space (inclusive of the space itself). Also, removing edge and conditioning on still leaves path as a ‘backdoor’. In figure (b), however, the edge is removed and we no longer have an i-backdoor set.

Causal diagrams showing examples where imitation learning can or cannot occur. Blue variables indicate latent reward variable, while red variables represent action. Light red indicates the inputs to the policy space, and light blue represents the minimal imitation surrogates. Figure extracted from 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders..

The i-backdoor criterion is used to characterise when imitation of an expert is possible given that the reward variable is unobserved.

Imitation by Backdoor 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders.: Given a causal diagram with policy space , we say that the distribution is imitable with respect to if and only if there exists some i-backdoor admissible set in this causal system. Further, the policy itself can be determined and is given by .

Applying this theorem, we can see that using set in the earlier figure (b), an imitating policy is learnable. Further, the policy itself is given by These results are impressive, but Zhang et al. further point out that the i-backdoor requirement is not necessary for imitation of expert performance. Consider the earlier figure (c) in which variable mediates all actions (intervention on ) on the outcome (latent reward ). We could imagine that learning a distribution over could be sufficient for imitation of the distribution of in this case. This train of thought motivates the following definitions and formalism’s.

Imitation Surrogate 4Kocaoglu, M., Shanmugam, K., & Bareinboim, E. (2017). Experimental design for learning causal graphs with latent variables. NIPS.: Consider a causal diagram with policy space . Take as an arbitrary subset of the observations, . We say is an imitation surrogate (i-surrogate) with respect to if . Here is the graph obtained by adding directed edges from to . is a new parent of that has been added in this procedure.

We say that the imitation surrogate is minimal if there is no subset of it such that the subset is also an imitation surrogate. A simple example of this is visible in figure (c) where both and are surrogates, but is the minimal surrogate. Figure (d) poses an additional problem in that the addition of the collider to (c) means is not identifiable even though we have an i-surrogate . The authors tackle this problem by realising that having a subspace of the policy space that yields an identifiable distribution is sufficient to solve the imitation learning task. Without delving into the details here, the authors of 3Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders. implement a confounding robust imitation learning algorithm and apply it to several interesting problems in which the causal approach is shown to be superior to naive imitation learning approaches.

This completes the discussion about this task. I expected causal imitation learning to become an active area of research as this paper was only recently released, being the first of its kind. This also concludes the development of the theory necessary for engaging with state-of-the-art research in causal reinforcement learning.

Image credit: Header Image.

References

- Bareinboim, E. (2020). Causal Reinforcement Learning. ICML 2020.

- Abbeel, P., & Ng, A. Y. (2004). Apprenticeship learning via inverse reinforcement learning. ICML.

- Zhang, J., Kumor, D., & Bareinboim, E. (2020). Causal imitation learning with unobserved confounders.

- Kocaoglu, M., Shanmugam, K., & Bareinboim, E. (2017). Experimental design for learning causal graphs with latent variables. NIPS.

Comments are being migrated. Check back soon.