In the last discussion we sought to rigorously define counterfactual statements and distributions in terms of our DAG formalism of causal inference. This proved fruitful, but the theory is still incomplete. The Markov properties gave us a way to infer independence relations from graph structure. Ideally, we would like a similar set of rules for inferring dependencies from the graph. We now consider the conditions that make this possible.

This Series

- A Causal Perspective

- Causal Models

- Learning Causal Models

- Causality and Machine Learning

- Interventions and Multivariate SCMs

- Reaching Rung 3: Counterfactual Reasoning

- Faithfulness

- The Do Calculus

Faithfulness

Faithfulness and causal minimality: Consider a distribution \(P_X\) and a DAG \(G\). We say

1. $P_X$ is faithful to $G$ if

   \[A \perp B \mid C \implies A \perp_G B \mid C\]

   for all disjoint vertex sets $A, B, C$, where $\perp_G$ denotes d-separation in $G$.

2. $P_X$ satisfies causal minimality with respect to $G$ if it is Markovian with respect to $G$, but not with respect to any proper subgraph of $G$.

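In fact, if $P_X$ is Markovian with respect to $G$, then faithfulness (1) implies causal minimality (2). In other words, a distribution that is both Markovian and faithful with respect to $G$ is guaranteed to satisfy causal minimality. Note that the converse does not hold: causal minimality does not imply faithfulness.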

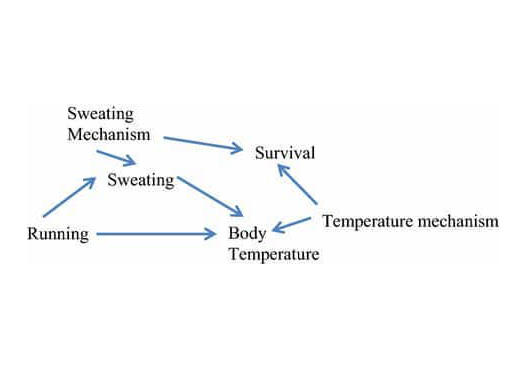

Faithfulness is an interesting, perhaps counterintuitive, property. It helps to think of it as a condition ensuring that effects along different paths do not exactly cancel each other out. Consider a linear model on a graph with $X \rightarrow Z$ and $X \rightarrow Y \rightarrow Z$. If the effects along these two paths perfectly cancel, $X$ and $Z$ are uncorrelated (and, in the Gaussian case, marginally independent) even though the graph contains the edge $X \rightarrow Z$. Since $X$ and $Z$ are not d-separated in the graph, such a distribution is Markovian but not faithful with respect to it.
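The cancellation can be checked numerically. The following is a minimal sketch, assuming a linear Gaussian SCM with unit-variance noise: $Y = aX + N_Y$ and $Z = cX + bY + N_Z$. The coefficient names `a`, `b`, `c` and the helper functions are illustrative choices, not taken from the source. Substituting gives $Z = (c + ab)X + bN_Y + N_Z$, so choosing $c = -ab$ should remove the correlation between $X$ and $Z$.

```python
import numpy as np


def simulate(a, b, c, n=200_000, seed=0):
    """Sample from the assumed linear Gaussian SCM X -> Y -> Z with edge X -> Z."""
    rng = np.random.default_rng(seed)
    x = rng.normal(size=n)
    y = a * x + rng.normal(size=n)          # X -> Y with coefficient a
    z = c * x + b * y + rng.normal(size=n)  # X -> Z (c) and Y -> Z (b)
    return x, y, z


def corr(u, v):
    """Sample Pearson correlation of two 1-D arrays."""
    return float(np.corrcoef(u, v)[0, 1])


def partial_corr(u, v, w):
    """Correlation of u and v after linearly regressing each on w."""
    design = np.column_stack([np.ones_like(w), w])
    res_u = u - design @ np.linalg.lstsq(design, u, rcond=None)[0]
    res_v = v - design @ np.linalg.lstsq(design, v, rcond=None)[0]
    return corr(res_u, res_v)


# Cancelling case: direct effect c equals -a * b.
x, y, z = simulate(a=1.0, b=1.0, c=-1.0)
cancel_corr = corr(x, z)              # marginal correlation of X and Z
cancel_pcorr = partial_corr(x, z, y)  # correlation of X and Z given Y

# Generic case: the two paths reinforce each other instead of cancelling.
x2, y2, z2 = simulate(a=1.0, b=1.0, c=1.0)
generic_corr = corr(x2, z2)

print(f"cancelling: corr(X, Z) = {cancel_corr:.3f}, "
      f"partial corr given Y = {cancel_pcorr:.3f}")
print(f"generic:    corr(X, Z) = {generic_corr:.3f}")
```

In the cancelling case the marginal correlation should be close to zero, while the partial correlation given $Y$ stays clearly away from zero, so the edge $X \rightarrow Z$ cannot simply be dropped. The cancellation requires the coefficients to satisfy $c = -ab$ exactly; any other choice of `c` restores the dependence.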

Resources

This series of articles is largely based on the great work by Jonas Peters, among others:

St John
